Social media site Instagram is tackling online bullying by launching a new intervention feature on its platform.

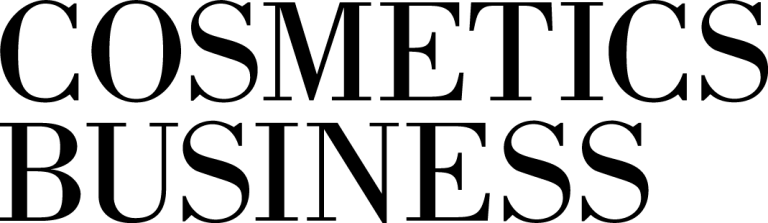

The AI-powered service notifies users when their comment may be considered offensive before it’s posted.

This, Instagram hopes, will give users a chance to reflect and undo their comment, preventing hateful speech from circulating on the site.

The site's new intervention feature

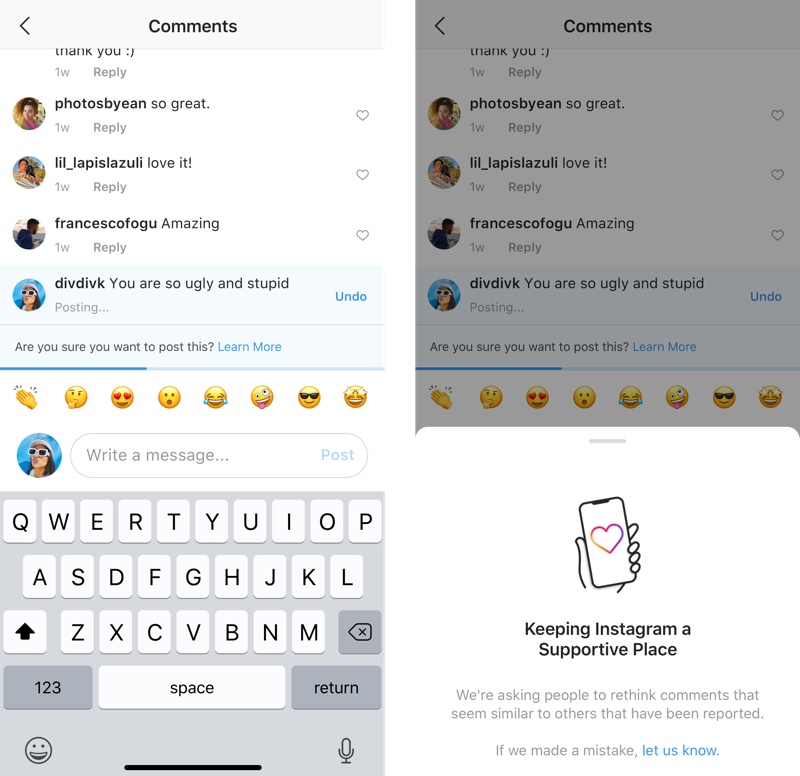

Instagram has also announced plans to launch its Restrict feature, which will allow users to ‘control their Instagram experience’.

Once a person has been ‘restricted’, their comments on the user’s posts will be visible only to the restricted person, meaning neither the recipient nor the public will be able to read them.

Users will also be able to make a restricted person’s comment visible by approving it.

The brand's 'Restrict' technology

In a statement, the head of Instagram, Adam Mosseri, said: “It’s our responsibility to create a safe environment on Instagram.

“This has been an important priority for us for some time, and we are continuing to invest in better understanding and tackling this problem.”

Instagram also confirmed it currently uses AI to detect bullying and other harmful content in comments, photos and videos.